Every week I have some version of the same conversation. A director of IT or a VP of engineering tells me they’re deploying an AI model into a clinical workflow or a pharmaceutical manufacturing process. They’ve benchmarked it carefully. The model performs beautifully in testing. The business case is airtight. And then I ask the question that derails the whole timeline: “What does your Predetermined Change Control Plan look like?”

Silence.

The problem isn’t that these teams are reckless or technically incompetent — often the opposite is true. The problem is that the AI/ML validation discipline is not the same discipline as traditional software validation, and most teams are applying the wrong mental model to a fundamentally different challenge. They’re treating an ML model like a configurable software application when it’s actually closer to a biological process — one that adapts, drifts, and can behave differently in production than it did in your test environment.

In FDA-regulated environments — pharmaceutical manufacturing, clinical decision support, medical device software — getting this wrong isn’t just a compliance problem. It’s a patient safety problem. This article is my attempt to compress the hard lessons I’ve accumulated over two decades in enterprise IT, FDA/CGMP validated systems, and health IT into a framework your team can actually use.

The FDA’s AI/ML Regulatory Landscape Is Moving Fast (But Not Fast Enough for Your Timeline)

The FDA has been remarkably thoughtful and forward-leaning in its approach to AI/ML regulation — more so than most industry practitioners realize. The agency issued its AI/ML-Based Software as a Medical Device (SaMD) Action Plan in January 2021, which remains the foundational document for anyone deploying AI in a device context. In May 2023, the FDA released a Discussion Paper specifically addressing AI/ML in Drug Manufacturing, signaling that the pharma ops world should expect formal guidance to follow.

One of the most important concepts the FDA has introduced is the Predetermined Change Control Plan (PCCP). Traditional FDA-regulated software is validated to a specification that doesn’t change without going through a formal change control process. But ML models are designed to change — that’s the point. PCCPs allow sponsors to define in advance the types of changes that can be made to an adaptive algorithm, the methodology for assessing those changes, and the performance boundaries that trigger re-validation, without requiring full resubmission for each model update.

This is genuinely good regulatory policy. But here’s the challenge: most organizations haven’t built the technical infrastructure to execute a PCCP. A PCCP requires that you have model versioning, training data lineage tracking, automated performance monitoring against reference datasets, and documented rollback procedures — none of which come out of the box with most commercial AI platforms.

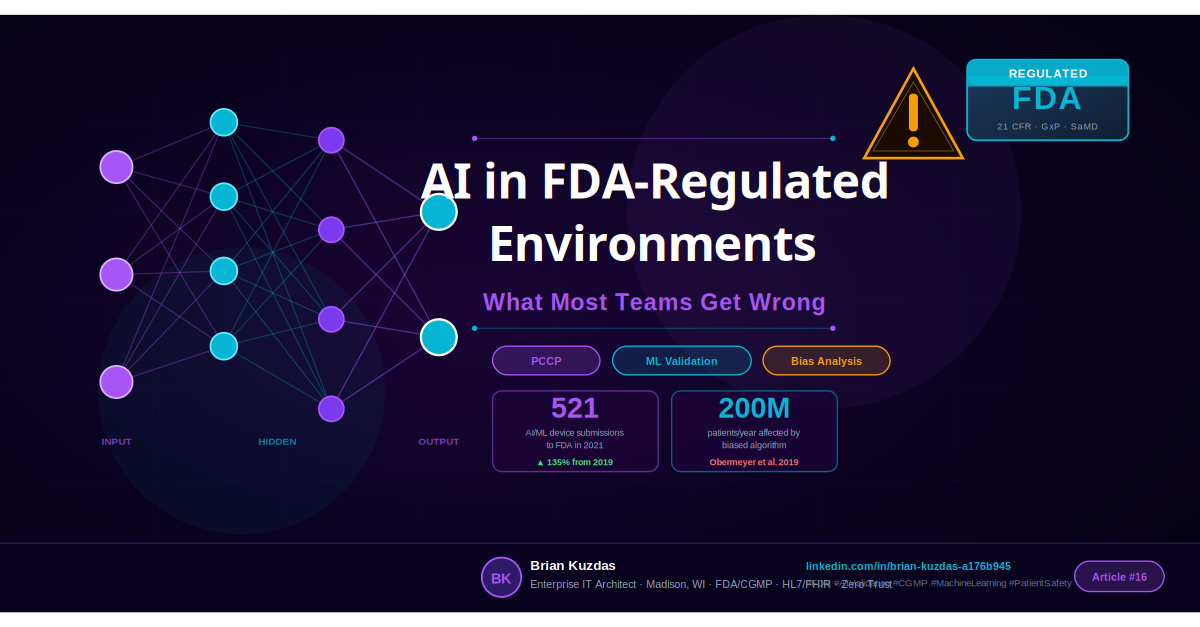

The scale of AI/ML activity in the regulated space is significant. The FDA received 521 AI/ML-enabled medical device submissions in 2021, up from 222 in 2019 — a 135% increase in just two years. The pipeline is not slowing down. That means the agency’s guidance is actively being stress-tested by real submissions, and the interpretive precedents being set today will shape the regulatory landscape for the next decade.

If you’re planning to submit an AI/ML-enabled device or incorporate AI into a GxP process, the time to engage regulatory affairs is before you finalize your model architecture, not after your model is trained and you’re 90 days from go-live.

Intended Use Is Everything — And Most Teams Define It Too Broadly

In FDA regulatory strategy, the intended use statement is not boilerplate. It is the cornerstone of your entire regulatory pathway. Get it wrong — too broad, too vague, or internally inconsistent — and every subsequent validation effort is built on sand.

Here’s a concrete example of where teams go wrong: A pharmaceutical company wants to use a machine learning model to “improve process quality.” That sounds reasonable. But “improve process quality” is not an intended use — it’s a business objective. Is the model being used as a process analytical technology (PAT) tool to monitor a continuous manufacturing process? Is it a clinical decision support (CDS) tool that recommends treatment adjustments? Is it a quality control classifier that flags deviations in product appearance? These three use cases have completely different regulatory pathways:

- Manufacturing process control AI falls under 21 CFR Part 211 (drug manufacturing), potentially triggering PAT framework requirements and process validation expectations under FDA’s 2011 Process Validation Guidance.

- Clinical Decision Support tools may or may not be regulated as medical devices, depending on whether they are “locked” or “adaptive” and whether they require clinician interpretation. The 21st Century Cures Act created a non-device CDS pathway, but it has specific conditions that must be met.

- SaMD (Software as a Medical Device) follows the International Medical Device Regulators Forum (IMDRF) SaMD framework, which the FDA has adopted, and is classified based on the significance of information provided and the healthcare situation.

The intended use statement forces you to answer the regulatory question before you build the system. If your team is saying “we’ll figure out the regulatory path after we’ve validated the model performs well” — reverse that immediately. The regulatory path determines what “performs well” even means.

One more critical point: the intended use statement locks down what the system is not for. Scope creep in AI systems — where a model trained for one purpose starts being used for adjacent purposes that weren’t validated — is one of the most common compliance failures I see in practice. A model validated to flag anomalous chromatography peaks is not validated to predict batch failure. They may seem related, but they’re different intended uses, different validation requirements, different risk profiles.

Why Validating an ML Model Is Nothing Like Validating Traditional Software

Traditional Computer Systems Validation (CSV) in GxP environments follows a well-established lifecycle: Installation Qualification (IQ), Operational Qualification (OQ), Performance Qualification (PQ), and ongoing change control. The logic is deterministic: for a given input, the system produces a defined output, and validation confirms the system does what the design specification says it should do.

ML models break this paradigm in several fundamental ways.

Non-determinism: Many ML architectures — particularly deep neural networks trained with stochastic gradient descent, or models that incorporate dropout layers — are not fully deterministic. The same input can produce slightly different outputs across runs. For regulators trained in CSV, where “same input = same output” is foundational, this is initially alarming. It doesn’t mean you can’t validate these systems, but it does mean you need to validate them differently: to a performance standard (the model correctly classifies ≥97% of inputs in the holdout set) rather than a deterministic specification.

Training data as part of the “product”: In traditional software validation, the source code and configuration are the product. In ML, the training data is also part of the product. The FDA’s 2023 Drug Manufacturing Discussion Paper explicitly calls out training data governance as a key concern. You need data lineage — the ability to trace every training example back to its source, provenance, and any preprocessing applied. You need version control for datasets, not just for code. You need to be able to re-train a model from scratch and demonstrate reproducible performance, which requires locking down not just the training algorithm but the training data.

Model drift: Software doesn’t degrade over time just because the world changes. A validated LIMS workflow from 2018 still works correctly today for the same inputs. But an ML model trained on manufacturing data from 2018 may perform poorly on data from 2024 if the process has changed, even subtly — different raw material suppliers, equipment maintenance patterns, environmental conditions, seasonal variation. This is called covariate shift and it’s one of the primary failure modes for production ML systems. Validation must include a monitoring protocol that detects drift and triggers re-evaluation against the original performance standards.

Statistical validation approaches: Because you’re validating to a performance standard rather than a specification, you need statistical rigor. This means proper holdout sets (data the model never saw during training), stratified cross-validation to ensure performance is consistent across subpopulations, confusion matrices that separately report false positive and false negative rates (because in a patient safety context, these have different consequences), and calibration analysis to ensure predicted probabilities reflect actual outcome frequencies.

The FDA has been clear that “the model was 95% accurate on the test set” is not a complete validation package. What was the composition of the test set? Was it representative of the intended use population? Was it truly held out, or did any of this data influence preprocessing decisions? Can you reproduce the test results? These are the questions that distinguish a robust ML validation package from a data science project report.

Algorithmic Bias: The Patient Safety Risk Hidden in Your Training Data

Algorithmic bias is not a social justice talking point — it is a patient safety risk that the FDA is actively tracking. If your training data systematically underrepresents certain demographic groups, your model will perform worse for those groups in production. In a clinical or manufacturing context, that means worse outcomes for real patients.

The most documented example is the pulse oximeter. Decades of clinical research have confirmed that pulse oximeters — devices that estimate blood oxygen saturation from a light signal transmitted through the fingertip — significantly overestimate oxygen levels in patients with darker skin tones. This isn’t an AI problem per se, but it’s a direct analog: a diagnostic tool trained primarily on one demographic population that produces systematically biased outputs for others. During COVID-19, this bias had life-or-death consequences, with darker-skinned patients’ actual hypoxia being masked by falsely elevated SpO2 readings.

In AI, the most frequently cited example of algorithmic bias in healthcare comes from a landmark 2019 paper in Science (Obermeyer et al., 2019). The researchers analyzed a widely-used commercial algorithm that was used to identify patients who would benefit from high-risk care management programs — affecting approximately 200 million patients per year in the US. The algorithm used healthcare spending as a proxy for health needs. Because structural inequities in the US healthcare system result in Black patients having lower healthcare spending than white patients with equivalent health burden, the algorithm systematically underestimated health needs for Black patients, resulting in Black patients being enrolled in care management programs at lower rates despite equivalent or greater need.

The FDA’s guidance on AI/ML devices explicitly calls for stratified performance analysis across demographic subgroups — including race/ethnicity, age, sex, and relevant clinical subgroups. This is not optional. Your validation package should report performance metrics broken down by these subgroups and demonstrate either that performance is comparable across groups, or provide a documented risk mitigation strategy for observed disparities.

The practical implication: audit your training data before you train your model. Understand its demographic composition. If it underrepresents any population that will be affected by the model’s outputs, you have a problem that no amount of training optimization will fix — you need more representative data, or you need to scope your intended use to exclude the underrepresented population explicitly.

The 5 Questions Your Compliance Team Will Ask (And How to Answer Them)

I’ve sat through a lot of AI/ML validation reviews. The compliance questions are largely the same across organizations. Here’s how to answer them.

1. What is the intended use?

This needs to be a single, unambiguous statement that a regulator could read without needing follow-up clarification. It should specify: the target population (patients, batches, processes), the specific output (classification, prediction, recommendation), the clinical or operational context, and the role of a human in the decision loop. Lock this down before you write a line of code.

2. Who validates model changes, and how are they classified?

You need a change classification taxonomy before you go live. At minimum: Major changes (new training data source, new model architecture, changes that expand the intended use) require full re-validation and potentially regulatory notification. Minor changes (re-training on updated data within the same distribution, hyperparameter tuning within validated bounds) require documented evaluation against performance benchmarks and change control approval. Cosmetic changes (code refactoring that doesn’t affect model logic, UI updates) require standard change control but not re-validation. The PCCP is the FDA’s framework for pre-approving this classification scheme.

3. What is your training data governance?

Answer must include: where the data came from (source systems, extraction queries, date ranges), how it was labeled (who labeled it, inter-rater reliability metrics if applicable), what preprocessing was applied (normalization, outlier removal, imputation), what version of the dataset was used for training, and how the dataset is stored and protected from unauthorized modification.

4. How do you monitor for model drift?

You need a reference dataset — a locked, representative sample of data from your validation period — and a monitoring protocol that periodically evaluates production model outputs against expected distributions. At minimum, monitor input feature distributions (to detect covariate shift) and output distributions (to detect performance degradation). Define the threshold that triggers a re-evaluation and document who is responsible for acting on monitoring alerts.

5. What is the rollback plan?

Every production ML deployment needs a model registry that maintains prior versions, version pinning for production deployments, tested rollback procedures, and a fallback workflow for the scenario where the AI system is unavailable. The rollback plan isn’t an afterthought — it’s evidence that you’ve genuinely thought about what happens when the model fails.

A Practical Framework for AI in GxP Environments

Having worked across pharmaceutical, device, and healthcare IT environments, here is the deployment progression I recommend for organizations new to AI in regulated contexts.

Stage 1: Process Analytics Start with AI systems that provide insights to humans but don’t control processes or influence clinical decisions. Statistical process control dashboards, predictive maintenance alerts, laboratory data trend visualization. These are typically not regulated as medical devices and have more straightforward validation requirements. Build your MLOps infrastructure here — data pipelines, model registry, monitoring dashboards, audit trails.

Stage 2: Quality Monitoring Advance to AI systems that automatically flag deviations or anomalies for human review. Batch record review assistance, specification exceedance detection, complaint trend analysis. The human remains in the decision loop, which reduces the regulatory burden, but the outputs are now directly connected to GxP decisions. This is where your training data governance and bias analysis practices become essential.

Stage 3: Process Control AI systems that participate in closed-loop control of manufacturing processes. PAT-based feedback control, real-time release testing decision support, continuous manufacturing process optimization. These require the most rigorous validation, the clearest intended use statements, and the most robust monitoring protocols. Engage FDA reviewers early through the Q-Submission (pre-Sub) process if you’re in the device space.

Stage 4: Clinical Decision Support AI systems whose outputs inform clinical decisions — patient triage, diagnostic imaging interpretation, treatment recommendation. The regulatory scrutiny here is at its highest. In this zone, the risk of algorithmic bias has direct patient safety consequences. The FDA’s guidance on non-device CDS carve-outs under the 21st Century Cures Act has specific conditions; if your system doesn’t meet them, you’re looking at a SaMD regulatory pathway.

The critical message: do not try to start at Stage 4. Build the organizational and technical infrastructure in Stages 1 and 2, learn how regulators respond to your validation packages, and develop internal expertise before you put an AI system directly into the patient care pathway.

Bottom Line

After decades of validating complex systems in regulated environments, here is what I know to be true about deploying AI in FDA-regulated contexts:

-

Define intended use before building anything. The regulatory pathway — and therefore the validation requirements — flows from the intended use statement. Vague intended use = validation chaos later.

-

Build your MLOps infrastructure before your first model. Model registry, data versioning, automated performance monitoring, audit trails — these are not nice-to-haves. They are the substrate on which compliant AI operates.

-

Write your PCCP before your first model update. The Predetermined Change Control Plan is what separates organizations that can iterate quickly on their AI systems from organizations that are perpetually stuck re-validating. Design it early.

-

Audit your training data for demographic bias. Before training, not after. The Obermeyer 2019 result — 200 million patients affected by a biased algorithm — should be a permanent fixture in your risk assessment process.

-

Stratified performance analysis is not optional. Report your model’s performance broken down by race/ethnicity, age, sex, and relevant clinical subgroups. If there are disparities, document them and address them.

-

Engage regulatory affairs the day you decide to build. Not the day before you submit. The FDA’s current guidance has enough interpretive flexibility that early engagement can dramatically simplify your pathway. Late engagement turns that flexibility into a compliance gap.

AI has genuine transformative potential in pharmaceutical manufacturing, clinical operations, and medical device technology. The organizations that will capture that potential are not the ones that move fastest. They are the ones that build the regulatory and technical infrastructure that allows them to move confidently — validated, monitored, and prepared to adapt safely over time.

References

- FDA. “Artificial Intelligence/Machine Learning (AI/ML)-Based Software as a Medical Device (SaMD) Action Plan.” January 2021. https://www.fda.gov/media/145022/download

- FDA. “Discussion Paper and Request for Feedback: Artificial Intelligence and Machine Learning in Drug Manufacturing.” May 2023.

- FDA. “Predetermined Change Control Plans for Machine Learning-Enabled Medical Devices: Guiding Principles.” October 2021.

- Obermeyer Z, et al. “Dissecting racial bias in an algorithm used to manage the health of populations.” Science. 2019;366(6464):447-453.

- EU Artificial Intelligence Act. Regulation (EU) 2024/1689. June 2024.

- ICH E6(R2) Good Clinical Practice. 2016.

- FDA Center for Devices and Radiological Health. “Artificial Intelligence and Machine Learning Performance Assessment and Monitoring.” 2023.

#FDA #AIValidation #MedicalDevices #CGMP #MachineLearning #PatientSafety #LifeSciences #RegTech