By Brian Kuzdas | Enterprise IT Architect | Madison, WI

The pharmaceutical company releases a critical update to their manufacturing execution system. Before that update can go live, it must traverse a gauntlet: User Requirements Specification, Functional Requirements Specification, Design Specification, coding, Installation Qualification, Operational Qualification, Performance Qualification, and a final validation summary report reviewed by three departments and two external consultants. Total elapsed time: fourteen months.

Meanwhile, their cloud-native competitors have pushed 847 deployments in that same window.

That gap isn’t just a competitive inconvenience. It’s becoming a patient safety issue. Slow software cycles mean slower detection of defects, longer exposure to vulnerabilities, and greater distance between the moment a problem is introduced and the moment it’s discovered. The regulatory frameworks designed to protect patients are, paradoxically, creating conditions that can undermine the quality they were meant to ensure.

The answer isn’t to abandon GxP validation. The answer is to modernize it — and DevSecOps is how you do that.

I’ve spent over two decades in enterprise IT for regulated industries. I’ve lived through the validation waterfall, argued with auditors about audit trails, and watched talented development teams buried under document management systems that nobody truly trusted. Here’s what I’ve learned about making DevSecOps work in regulated environments — practically, compliantly, and sustainably.

The GxP Validation Waterfall: How Compliance Became a Bottleneck

The traditional validation lifecycle for a computerized system in a GxP environment follows a well-established sequence: User Requirements Specification (URS), Functional Requirements Specification (FRS), Design Specification (DS), development, Installation Qualification (IQ), Operational Qualification (OQ), Performance Qualification (PQ), and a final Validation Summary Report (VSR). The documentation trail for each step must be complete, reviewed, approved, and cross-referenced before advancing.

For a moderately complex system update, this process typically runs 6 to 18 months. For major implementations or platform migrations, 24 to 36 months is not unusual. And if a critical defect surfaces late in that cycle, the entire chain may need revision — adding months and compounding costs.

This approach was engineered for an era when software was deployed as physical media, hardware and software were purchased together, and “change” was a rare, expensive event requiring committee approval. In 2024, that model is a straitjacket.

The DORA data is stark. According to the 2023 State of DevOps Report (Google DORA), elite-performing DevOps organizations deploy to production 973 times more frequently than low-performing organizations. Their lead time for changes is less than one hour. Their change failure rate is one-fifth that of low performers. Their mean time to restore service from a failure is under one hour versus multiple days.

The validation waterfall doesn’t just slow you down — it creates a false sense of quality. A fourteen-month validation cycle doesn’t mean the software is fourteen months better than one validated in three months. It means the documentation is older, the environment has changed more in the interim, and the team that wrote the requirements is no longer the team delivering the code.

The real quality driver in modern software is continuous testing, fast feedback loops, and the ability to detect and fix defects before they reach production. That is the core proposition of DevSecOps — and it is entirely compatible with GxP compliance when implemented thoughtfully.

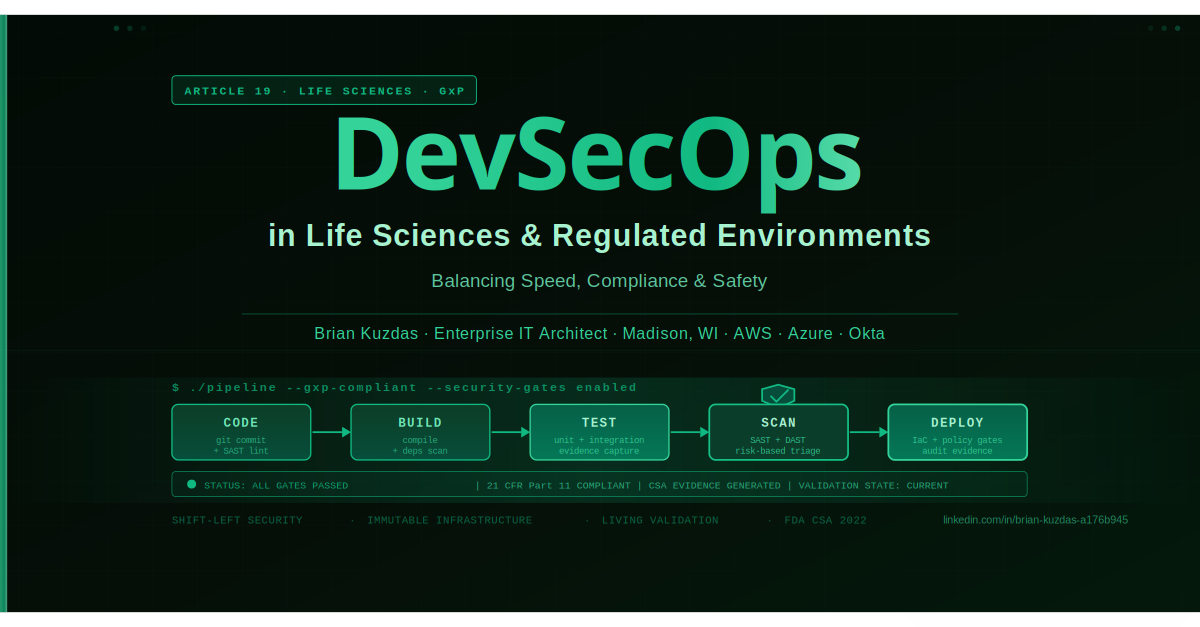

Shifting Security Left: Integrating Security Into CI/CD Without Breaking Validation

”Shift left” means moving security earlier in the development cycle — into the IDE, into pull request checks, into the automated build pipeline — rather than treating it as a pre-release gate. In regulated environments, this isn’t just good security hygiene; it is a compliance accelerator.

Static Analysis in Pull Request Gates

Every pull request triggers SAST scanning before code is merged. Developers see security findings in real time — not six months later during a pre-validation security review. Tools like SonarQube, Checkmarx, and Veracode integrate directly with GitHub, GitLab, and Azure DevOps. Configure quality gates: high-severity findings must be triaged or remediated before merge is permitted.

The validation implication: SAST results become validation evidence. Configure your pipeline to generate findings reports as signed, timestamped artifacts. Your computer validation documentation now has automated, version-linked evidence of security review for every commit. An auditor asking “how was security reviewed?” receives a URL to the pipeline run, not a six-month-old spreadsheet.

Automated Compliance Checks in the Pipeline

Infrastructure-as-code (IaC) with automated policy checks — Open Policy Agent, HashiCorp Sentinel, AWS Config Rules — means your infrastructure is validated at the definition level, before deployment. No more manual qualification scripts asking whether a server has the correct OS configuration; the infrastructure cannot be deployed if it violates policy.

For GxP environments, this is a fundamental shift. Your IQ protocol no longer documents what a human observer verified. It references the Terraform plan that proved the infrastructure was correctly configured before it was ever instantiated — with the commit hash, the policy version, and the approver identity as permanent, immutable record.

Immutable Infrastructure for Reproducibility

GxP validation requires that you can reproduce a validated state. Immutable infrastructure — containers, golden AMIs, baked VM images — means your deployment artifacts are versioned, cryptographically signed, and reproducible. The validated “thing” is the container image with a specific SHA-256 digest, not the running server that might have drifted since qualification.

Validation Evidence Generation as Code

The most transformative shift: stop generating validation documentation by hand. Your pipeline automatically generates test execution reports, code coverage metrics, dependency vulnerability scans, container image vulnerability assessments, and infrastructure configuration snapshots — each artifact cryptographically linked to the commit that produced it, automatically archived, and permanently auditable.

SAST and DAST in Regulated Pipelines: Practical Implementation

Static Application Security Testing (SAST) and Dynamic Application Security Testing (DAST) are not new concepts. But deploying them in regulated environments requires discipline — especially around managing the false positive problem that plagued early adopters.

SAST Implementation in CI/CD

SAST tools analyze source code without executing it. In a GxP CI/CD pipeline, three tools dominate:

- SonarQube — Open-source/commercial, integrates with most CI systems, provides quality gates, OWASP vulnerability coverage, and historical trending. Excellent for organizations new to shift-left security. The Community Edition is free; Enterprise adds branch analysis and reporting.

- Checkmarx — Enterprise-grade, with strong presence in regulated industries. Compliance reporting aligned to HIPAA and FDA contexts. Supports incremental scanning for large codebases, which is critical when you need fast PR feedback.

- Veracode — SaaS-based, strong compliance reporting, widely accepted in FDA-regulated contexts. The Veracode Policy feature allows organizations to define acceptable risk thresholds that align with their GxP risk classification.

The False Positive Problem

Here is the honest truth about SAST in regulated pipelines: on a large legacy codebase, you may see hundreds of findings on day one. You cannot risk-accept everything. You cannot chase everything. And you absolutely cannot have a policy of “fix everything before merge” when your backlog is measured in weeks.

The solution is a risk-based triage workflow embedded in your pipeline. Define tiers: critical and high findings must be remediated or formally risk-accepted before merge. Medium findings are triaged within a sprint. Low findings are tracked as technical debt. The risk acceptance record — who accepted, why, what compensating controls exist — is generated as a pipeline artifact and becomes part of your validation package.

DAST in Pre-Production

Dynamic Application Security Testing runs against a live application, testing it from the outside. In a regulated pipeline, DAST belongs in your pre-production environment, running automatically after every deployment to staging:

- OWASP ZAP — Free, powerful API for pipeline integration. Run passive mode during staging deployments for zero-interference reconnaissance; run active mode on demand against dedicated test instances.

- Burp Suite Enterprise — Commercial, strong reporting, CI/CD integration API. The audit trail it generates aligns well with IQ/OQ evidence requirements.

A DAST scan result tied to a specific build artifact in a specific environment on a specific date provides evidence of security testing against a running application — evidence that traditional manual testing protocols rarely produced at this level of specificity.

The FDA’s Evolving Thinking: From CSV to CSA

The most significant development in GxP software quality in a decade: the FDA’s Computer Software Assurance (CSA) framework.

Published as a draft guidance document in September 2022 by CDER and CBER, CSA represents a fundamental paradigm shift in how FDA thinks about software quality in regulated environments. The core principles:

Risk-Based Proportionality: Not all software requires the same level of validation rigor. A manufacturing execution system controlling API synthesis warrants substantially more verification than a spreadsheet used to track internal meeting notes. CSA formalizes this common-sense approach with a structured risk categorization model.

Intended Use Drives Verification: The extent of verification activities should be proportional to the intended use and the risk the software poses to product quality and patient safety. This is not new in spirit — GAMP 5 has articulated this for two decades — but CSA makes it the explicit organizing principle rather than a footnote.

Critical Thinking Over Scripted Documentation: FDA is explicitly moving away from prescriptive documentation requirements. The 100-page Installation Qualification protocol with manual screenshots of configuration screens is not the point. CSA says: demonstrate that critical thinking was applied, that the system does what it needs to do, and that you have evidence. The form of that evidence is flexible.

Testing Is Verification: Traditional paper-based CSV often used documentation as a proxy for testing. Lengthy protocols existed to describe the testing that would occur, not because the description itself provided quality assurance. CSA shifts emphasis to the tests themselves: automated, repeatable, evidence-generating tests are more valuable than documented manual test scripts.

The Case for Quality Program

The FDA’s Case for Quality initiative (within CDER’s Office of Pharmaceutical Quality) has been piloting collaborative approaches with pharmaceutical companies to demonstrate that quality outcomes can be achieved through modernized practices. Consistently, participating companies that adopted continuous process verification and modern software quality practices reduced defect rates and improved audit outcomes. They did not find that moving faster created more problems.

The “Living Validation” Concept

Traditional CSV produced a point-in-time validation: the system was validated as of date X in environment Y with software version Z. Any change potentially invalidated that state and required re-validation.

CSA enables a “living” validation model:

- Validation evidence accumulates continuously via automated testing

- Changes trigger incremental re-validation of affected functionality only

- The “validation state” reflects the current system, not a historical snapshot

- Regulatory submissions can reference continuous quality evidence rather than periodic qualification campaigns

GAMP 5 Second Edition (2022) and its appendices — particularly Appendix M on Agile development — provide the industry framework for implementing living validation alongside modern development practices. The ISPE guidance explicitly endorses sprint-based verification, automated regression suites, and continuous documentation generation.

Practical Patterns for GxP-Compliant CI/CD Pipelines

Here are the patterns that actually work in regulated environments. I’ve implemented variations of these across pharmaceutical, biotech, and medical device organizations with quality systems ranging from ISO 13485 to 21 CFR Part 820.

Pattern 1: Infrastructure as Code with Policy Guardrails

Terraform Configuration → terraform plan

→ OPA Policy Evaluation → PASS/FAIL

→ terraform apply (only if PASS)

→ Deployment Record (plan output + policy result + commit hash)

All infrastructure defined in Terraform or Pulumi. Open Policy Agent rules encode your SOPs and configuration standards in machine-readable policy. Every infrastructure change is a git commit with an author, timestamp, and diff. Your IQ protocol references the commit hash, not a manual configuration checklist.

Pattern 2: Automated Test Evidence Capture

Your pipeline generates the following artifacts per build, automatically archived with run ID and commit hash:

- JUnit/NUnit/pytest test reports (XML) — timestamped, signed

- Code coverage reports (HTML + JSON) — linked to specific commit

- Dependency vulnerability scan (JSON output from OWASP Dependency-Check or Snyk)

- Container image scan results (Trivy or Grype)

- Infrastructure compliance report (OPA evaluation output)

These are your OQ evidence artifacts. No manual screenshots. No templated documents filled in by the developer who wrote the code being tested.

Pattern 3: Electronic Deployment Records as Pipeline Artifacts

In manufacturing, every batch has a batch record. Your software deployment should too. Define a pipeline step that generates a deployment manifest:

Deployment Record = {

artifact_id: "myapp:2.4.1-sha256:abc123",

deployment_target: "prod-us-east-1",

deployed_by: "pipeline-service-account",

approved_by: ["[email protected]", "[email protected]"],

test_evidence: "https://artifactory.company.com/runs/847",

scan_results: "PASS (0 high, 3 medium triaged)",

timestamp: "2024-11-15T14:32:11Z"

}

Stored immutably in your artifact repository (Artifactory, Nexus) with a permanent, content-addressable URL. Referenced in your change control system.

Pattern 4: Audit Trail via Git History and Pipeline Logs

21 CFR Part 11 requires a computer-generated, time-stamped audit trail that records operator actions. Modern DevOps gives you a better audit trail than any LIMS or legacy DMS:

- Every code change: who changed what, when, and the linked change request (enforced by branch protection)

- Every build: which code, which tools, which configuration

- Every deployment: which artifact, to which environment, approved by whom, with what test evidence

- Every configuration change: IaC diff, policy evaluation result, approver

Structure this properly, and you have an audit trail that FDA investigators actually respect — because it’s complete, automated, and cannot be retroactively edited.

Pattern 5: Approval Gates as Pipeline Code

Promotion from test to staging to production requires explicit approval. Implement this in your pipeline as protected environment rules with required reviewers. The approval record is a timestamped event in your VCS with the approver’s cryptographic identity. That is your change control electronic signature — 21 CFR Part 11 compliant without a separate eSig system.

Making the Business Case for DevSecOps Investment

This is where most DevSecOps advocates lose their audience. The technology makes sense. The compliance alignment is there. But the business case needs to be made in CFO-legible language — and the numbers are compelling when you construct them correctly.

The Cost of Late-Stage Defect Detection

Research from the Systems Sciences Institute at IBM found that fixing a defect in production costs approximately 100x more than fixing the same defect during the design phase. The multiplier is higher in regulated environments:

- A production defect in a GxP system may trigger a product recall or market withdrawal

- FDA notification may be required under 21 CFR Part 314.81 (Field Alert Reports)

- Full re-validation is required before deploying the fix — at the same cost as the original validation

- Commercial impact of a manufacturing system outage can exceed $1 million per hour for some commercial-scale drug product operations

- Potential consent decree or warning letter risk if the defect indicates systemic quality failure

Every SAST finding caught in a pull request review is a defect that was not allowed to mature into a production incident. The pipeline pays for itself.

DORA Metrics as ROI Drivers

The 2023 DORA State of DevOps Report directly correlates DevOps maturity with business outcomes. Organizations in the elite performance tier are:

- 3.1× more likely to meet or exceed organizational performance targets

- 2.4× more likely to meet or exceed profitability goals

- 53% less change failure rate than low performers

- 0.9% change failure rate vs. 46-60% for low performers

For a life sciences company, moving from low to elite DevOps performance on a single product’s software supply chain commonly represents months of accelerated time-to-market — which, for a drug product generating $50M/year in revenue, translates directly to dollars.

The Re-Validation Cost Argument

Traditional CSV environments avoid changes because every change triggers re-validation costs. This accumulates technical debt. When the debt comes due — a vendor drops support for a deprecated platform, a critical vulnerability requires emergency remediation, or a regulatory inspection finds systemic deficiencies — the accumulated re-validation cost is enormous.

A DevSecOps approach with continuous, automated validation evidence makes changes cheaper because re-validation is largely automated. A three-hour automated pipeline run replaces weeks of manual testing and documentation preparation. Organizations routinely report 70-80% reduction in validation effort per release cycle after implementing automated evidence generation.

Quantifying the Business Case

Structure the ROI argument in four components:

- Current state cost baseline: Labor hours for manual validation activities × burdened cost per hour × annual release frequency

- Incident risk reduction: Historical or industry average probability of production defect × estimated cost per incident (recall, response, re-validation, commercial impact)

- Time-to-market acceleration: Average release cycle reduction × commercial value of earlier market access

- Compliance risk reduction: Probability of audit finding × estimated cost of a Form 483 observation remediation or Warning Letter response

For most mid-size pharma organizations operating traditional CSV, the business case for a DevSecOps transformation typically yields a 3- to 5-year ROI of 400-600%. The numbers make the case.

Bottom Line

DevSecOps in life sciences isn’t about moving fast and breaking things. It’s about moving deliberately, automating the evidence that quality was assured, and building security into the development process rather than bolting it on at the end.

Practical takeaways:

-

Start with the CSA mindset, not the CSV mindset. FDA’s Computer Software Assurance draft guidance is your regulatory permission slip for modernization. Read it, share it with your QA leadership, and build your transformation case on it.

-

Automate evidence generation before you change your validation process. Start capturing automated test results, scan reports, and deployment manifests as pipeline artifacts. You’re not changing your validation — you’re improving the evidence quality.

-

Deploy SAST in observation mode first. Don’t block merges on day one. Run SAST scans, capture results, build your baseline and triage backlog. Then progressively enforce. This avoids the culture shock that kills adoption.

-

Define your immutable deployment artifact. Whatever you deploy — container image, AMI, package — give it a content-addressable identity (SHA-256 digest). Your IQ validates the artifact, not the running instance.

-

Bring your QA team into the pipeline design from day one. The most successful DevSecOps transformations in regulated environments treated QA as architects of the quality system, not gatekeepers reviewing outputs. When QA owns the evidence generation requirements, you get quality documentation that actually satisfies auditors.

-

Pilot on a lower-risk system. Don’t start with your commercial drug product manufacturing execution system. Start with a supporting system — LIMS, internal portal, development tool. Build organizational confidence, demonstrate the audit trail, earn the trust of your quality organization.

The FDA is giving you permission to do this better. The technology exists. The patterns are proven. The only remaining barrier is organizational will.

References & Citations

-

FDA Computer Software Assurance for Production and Quality System Software — Draft Guidance, September 2022. U.S. Food & Drug Administration, Center for Drug Evaluation and Research / Center for Biologics Evaluation and Research. https://www.fda.gov/regulatory-information/search-fda-guidance-documents/computer-software-assurance-production-and-quality-system-software

-

GAMP 5: A Risk-Based Approach to Compliant GxP Computerized Systems, Second Edition — International Society for Pharmaceutical Engineering (ISPE), 2022. Includes Appendix M: Agile Approach.

-

2023 Accelerate State of DevOps Report — Google/DORA Research Program. https://dora.dev/research/2023/dora-report/

-

FDA Case for Quality — CDER Office of Pharmaceutical Quality, Continuous Program. https://www.fda.gov/drugs/development-approval-process-drugs/case-quality

-

OWASP Software Assurance Maturity Model (SAMM), v2.0 — OWASP Foundation. https://owaspsamm.org/

-

21 CFR Part 11 — Electronic Records; Electronic Signatures — FDA Regulation. https://www.ecfr.gov/current/title-21/chapter-I/subchapter-A/part-11

-

21 CFR Part 314.81 — Field Alert Reports — FDA Regulation.

-

Systems Sciences Institute (IBM) — Cost of Software Defects by Development Phase. Referenced in: Pressman, R.S., Software Engineering: A Practitioner’s Approach (various editions).

-

Accelerate: The Science of Lean Software and DevOps — Forsgren, N., Humble, J., & Kim, G. IT Revolution Press, 2018.

-

ISPE Good Practice Guide: Process Performance and Product Quality Monitoring — ISPE, related to continuous process verification.

#DevSecOps #LifeSciences #GxP #FDACompliance #CICD #CloudSecurity #Pharma #DevOps #ComputerSoftwareAssurance #EnterpriseIT #ZeroTrust #CISOInsights #Biotech #MedDevice #Validation