Healthcare data is the most valuable data on earth. It sells for 10x more than a credit card number on the dark web. Yet most HL7 and FHIR implementations treat security as an afterthought.

I’ve spent years designing and building clinical data integration systems — including a multi-protocol HL7 ingestor platform that handles everything from legacy ASTM instruments to modern FHIR R4 endpoints. In that time, I’ve seen the same security mistakes repeated across organizations of every size.

The problem isn’t that healthcare IT teams don’t care about security. They do. The problem is that they focus on the wrong things.

Here’s what gets missed — and what to do about it.

Mistake #1: Treating the HL7 Pipe as a Trusted Channel

The single most common assumption I see is this: “The data comes from inside our network, so it’s safe.”

This is the perimeter fallacy applied to clinical data integration — and it’s dangerous.

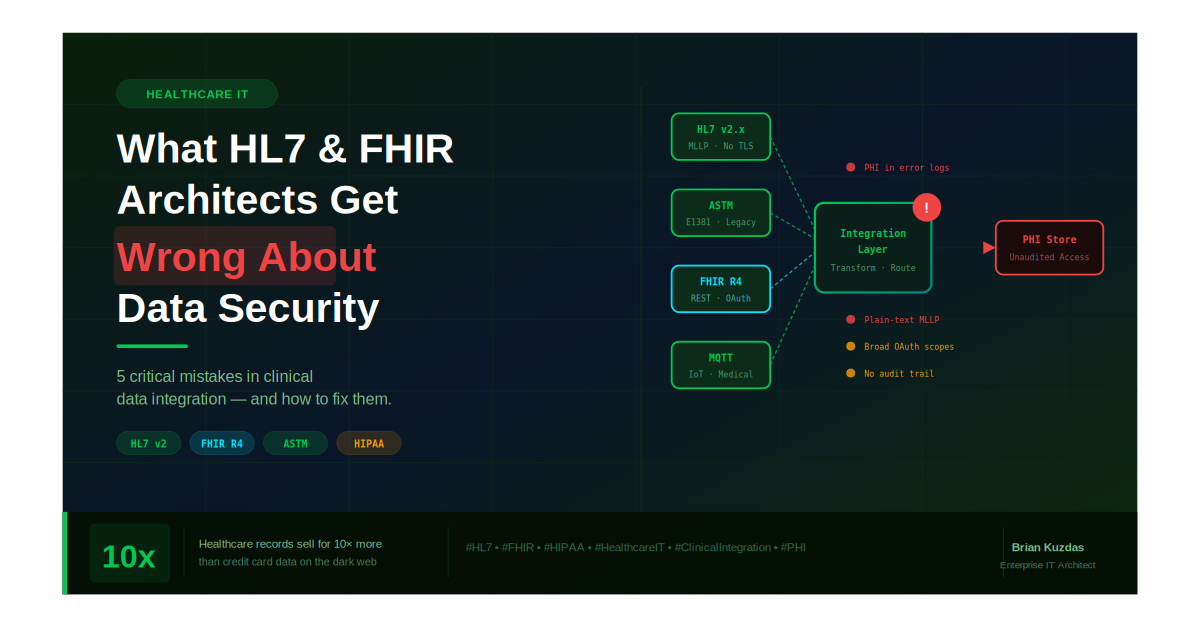

HL7 v2.x messages travel over MLLP (Minimal Lower Layer Protocol) — a protocol designed in the 1980s that has no native encryption, no authentication, and no integrity checking. By default, an HL7 message sent across your internal network is transmitted in plain text.

What this means in practice:

- Any device on the same network segment can intercept lab results, ADT events, and order messages

- A compromised internal system can inject malicious or falsified HL7 messages

- There is no built-in mechanism to verify the message came from the system it claims to have come from

The fix: Wrap every MLLP connection in TLS. Enforce mutual TLS (mTLS) where the server and client both authenticate. Treat every HL7 pipe as an untrusted channel until proven otherwise.

Mistake #2: Ignoring the Audit Trail

Under HIPAA’s Security Rule, covered entities must implement hardware, software, and procedural mechanisms to record and examine access and activity in information systems containing PHI.

In plain English: you must be able to prove who accessed what, when, and what happened to it.

Most HL7 integration engines log transactions — message received, message sent, acknowledgment returned. That’s not an audit trail. That’s a delivery receipt.

A true audit trail answers:

- Which user or system triggered this message?

- What was the exact content of the message at each hop?

- Was the message modified in transit or during transformation?

- Who had access to the raw PHI payload?

I built full message logging — original payload, transformed payload, processing timestamp, source system, destination system — into my HL7 platform from day one. Not because it was required for the MVP, but because without it, you cannot investigate an incident, pass a HIPAA audit, or prove data integrity.

The fix: Log the full message payload at every stage — ingestion, transformation, and delivery. Store logs in an append-only, tamper-evident system. Treat your message log like a batch record.

Mistake #3: FHIR ≠ Secure by Default

FHIR R4 is a massive improvement over HL7 v2.x. It’s RESTful, JSON-based, human-readable, and far easier to work with. Many architects assume that because FHIR is modern, it’s also secure.

It is not secure by default.

Common FHIR security gaps I’ve seen in the wild:

Misconfigured OAuth scopes

FHIR’s SMART on FHIR framework uses OAuth 2.0 for authorization. But OAuth scopes that are too broad — patient/*.read instead of patient/Observation.read — give applications far more access than they need. This violates the principle of least privilege and dramatically expands your blast radius if a token is compromised.

Unauthenticated FHIR endpoints Publicly accessible FHIR servers — even in test/dev environments — are routinely discovered and scraped. A misconfigured dev endpoint with real patient data is a HIPAA breach waiting to happen.

Unvalidated resource references FHIR resources reference each other by ID. Without server-side validation, an attacker who can POST to a FHIR endpoint can craft references to resources they shouldn’t have access to — a classic broken object-level authorization (BOLA) vulnerability.

The fix: Enforce narrow OAuth scopes. Lock down every FHIR endpoint — especially dev/test. Validate all resource references server-side before returning data. Audit token usage regularly.

Mistake #4: Multi-Protocol Environments Multiply Risk

Modern clinical environments don’t run on a single protocol. They run on a mix of:

- HL7 v2.x from legacy analyzers and LIS systems

- ASTM E1381/E1394 from older lab instruments

- FHIR R4 from modern EHR integrations

- MQTT from IoT medical devices

Each protocol has its own security model — or lack thereof. The danger isn’t in any single protocol. It’s in the translation layer between them.

When a message moves from an ASTM instrument through an HL7 transformer into a FHIR resource, there are multiple points where:

- PHI can be inadvertently exposed in logs

- Data integrity can be silently corrupted during field mapping

- Access controls from one protocol don’t carry over to the next

Most organizations have strong controls at the endpoints but almost no controls at the translation layer — exactly where the data is most vulnerable and most likely to be incorrect.

The fix: Treat every translation point as a security boundary. Validate data integrity before and after transformation. Log the delta — what changed, when, and why. Apply access controls at the integration layer, not just at the source and destination.

Mistake #5: PHI in the Wrong Places

This one sounds obvious. It isn’t.

In a complex HL7/FHIR environment, PHI ends up in places nobody planned for:

- Error logs — A failed message parse dumps the raw HL7 payload, including patient name, DOB, and MRN, into an unencrypted log file

- Dead letter queues — Messages that fail to deliver sit in a queue, often with no access controls or retention policy

- Test environments — Production data copied to dev/test for debugging, with none of the production security controls in place

- Monitoring dashboards — Message content surfaced in an ops dashboard visible to staff without PHI access rights

Each of these is a HIPAA violation waiting to happen — and none of them show up in a standard security scan.

The fix: Audit every location where message content could be stored or surfaced. Mask or truncate PHI in error logs. Apply retention and access policies to dead letter queues. Never use real patient data in non-production environments.

The Core Problem: Security as Integration Tax

The root cause of most of these mistakes is cultural. In healthcare IT, integration projects are under constant pressure — go-live dates driven by clinical operations, vendor timelines, and regulatory mandates. Security controls are viewed as friction — something that slows down the integration and can be “added later.”

Later never comes.

By the time a system is in production, handling real patient data, serving clinical workflows, there is enormous organizational resistance to going back and adding encryption, tightening access controls, or rebuilding the audit trail. The cost of retrofitting security is always higher than the cost of building it in from the start.

The organizations that get this right treat security not as a feature to be added but as a property of the architecture — something that is designed in, validated, and continuously verified throughout the system’s lifetime.

The Bottom Line

Healthcare data deserves the most rigorous security posture of any data class. The combination of HIPAA obligations, the sensitivity of PHI, and the life-safety implications of clinical systems demands it.

But HL7 and FHIR architectures are routinely deployed with gaps that would be unacceptable in any other regulated industry.

The path forward isn’t more tools. It’s a shift in mindset — treating clinical data integration with the same discipline that pharmaceutical manufacturers apply to their validated systems. Every message is a record. Every transformation is a change event. Every access is auditable.

Because in healthcare IT, the data flowing through your pipes isn’t just sensitive. For some patients, it’s the difference between the right treatment and the wrong one.

Building or securing a clinical data integration platform? Drop a comment or connect — I’d love to compare notes on what’s working in the field.

References & Further Reading

Healthcare Data Value & Breach Statistics

- Verizon Data Breach Investigations Report (DBIR) 2024 — Human element in breaches https://www.verizon.com/business/resources/reports/dbir/

- Experian: “The Value of Healthcare Data on the Dark Web” — PHI vs. credit card pricing https://www.experian.com/blogs/ask-experian/why-is-medical-identity-theft-so-valuable-to-criminals/

- IBM Cost of a Data Breach Report 2024 — Healthcare remains the most expensive industry for breaches ($9.77M average) https://www.ibm.com/reports/data-breach

HL7 & MLLP Standards

- HL7 v2.x Transport Specification (MLLP) — HL7 International https://www.hl7.org/implement/standards/product_brief.cfm?product_id=55

- HL7 International Security Work Group https://www.hl7.org/Special/committees/secure/index.cfm

FHIR Security & SMART on FHIR

- HL7 FHIR R4 Security Documentation https://hl7.org/fhir/R4/security.html

- SMART App Launch Framework (OAuth 2.0 / FHIR) https://hl7.org/fhir/smart-app-launch/

- OWASP API Security Top 10 — API1:2023 Broken Object Level Authorization (BOLA) https://owasp.org/API-Security/editions/2023/en/0xa1-broken-object-level-authorization/

HIPAA Regulatory References

- HIPAA Security Rule — 45 CFR Part 164, Subpart C https://www.hhs.gov/hipaa/for-professionals/security/index.html

- 45 CFR § 164.312(b) — Audit Controls (technical safeguard requiring audit trail) https://www.ecfr.gov/current/title-45/subtitle-A/subchapter-C/part-164/subpart-C/section-164.312

- HHS Office for Civil Rights — HIPAA Breach Notification Rule https://www.hhs.gov/hipaa/for-professionals/breach-notification/index.html

ONC & CMS Interoperability

- ONC Interoperability and Patient Access Final Rule (FHIR mandate) https://www.healthit.gov/topic/interoperability/policy/final-rule-implementing-21st-century-cures-act

- CMS Interoperability and Patient Access Rule (CMS-9115-F) https://www.cms.gov/regulations-and-guidance/guidance/interoperability/index

Infrastructure Security Best Practices

- NIST SP 800-66 Rev. 2 — Implementing the HIPAA Security Rule https://csrc.nist.gov/publications/detail/sp/800-66/rev-2/final

- NIST SP 800-204 — Security Strategies for Microservices-based Application Systems (applicable to FHIR APIs) https://csrc.nist.gov/publications/detail/sp/800-204/final

#HealthcareIT #HL7 #FHIR #HIPAA #CyberSecurity #ClinicalIntegration #DataSecurity #PHI #HealthTech #EnterpriseIT