Author: Brian Kuzdas | Enterprise IT Architect LinkedIn: linkedin.com/in/brian-kuzdas-a176b945

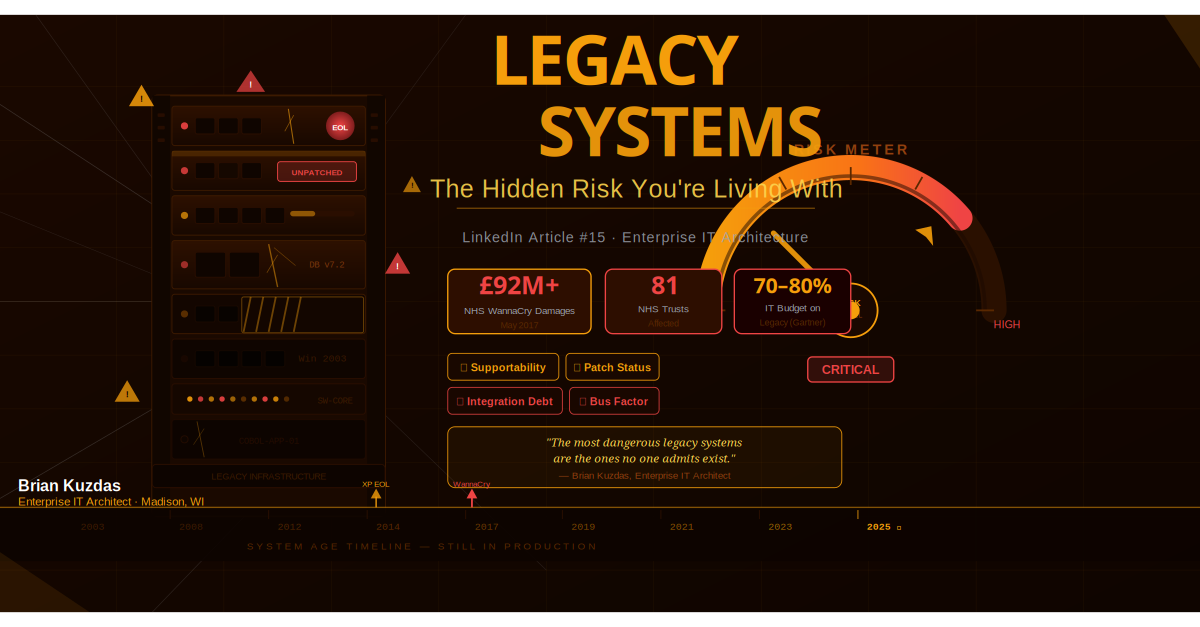

On May 12, 2017, a Friday afternoon, ransomware called WannaCry began tearing through networks across the globe. In the United Kingdom, 81 NHS trusts were affected within hours. Hospitals locked staff out of patient records. Ambulances were diverted. Surgeries were cancelled. CT scanners, MRI machines, and the digital infrastructure holding the country’s largest healthcare system together went dark — not from a sophisticated nation-state cyberweapon, but from a vulnerability that Microsoft had patched two months earlier.

The final toll: 19,000 appointments cancelled, over £92 million in damages, and a National Audit Office investigation that laid bare an uncomfortable truth. NHS systems were running Windows XP — an operating system Microsoft had ended support for in April 2014. Three years earlier. The exploit used, EternalBlue (MS17-010), had a patch available since March 2017. Two months before the attack. Hospitals were hit not because defenders were unaware — they were warned — but because patching legacy systems in large, complex healthcare organizations is not a simple task. It requires funding, change control processes, downtime windows, and above all, organizational will.

The WannaCry attack on the NHS is not a cautionary tale from a distant past. It is a template for exactly what is happening right now in enterprise IT environments around the world. Every organization of sufficient size has legacy systems — some visible, many invisible. Some are maintained, most are not. Some are documented, others exist only in the institutional memory of a single engineer who joined before the current CISO was born.

This article is about that problem: the hidden risk sitting inside your infrastructure, aging quietly, collecting vulnerabilities, and waiting for someone to exploit it. It is also about what you can do about it — practically, defensibly, and without abandoning the operational continuity your business depends on.

How to Identify Legacy Risk: It’s Not Just About Age

The first mistake most organizations make when thinking about legacy systems is conflating age with risk. Age is a proxy — it correlates with risk, but it does not define it. A 15-year-old application running on a fully supported, actively patched platform with modern authentication and strong network segmentation is infinitely less dangerous than a 3-year-old application running on an end-of-life OS, internet-facing, with no monitoring and a single developer who understands how it works.

True legacy risk assessment requires four dimensions, each of which must be evaluated independently.

Supportability asks the most basic question: is anyone still responsible for securing this system? This means checking vendor end-of-life (EOL) dates for every layer of the stack — the operating system, middleware, runtime frameworks, database engine, and third-party libraries. Tools like endoflife.date provide a consolidated view of EOL timelines across hundreds of platforms. CISA maintains a Known Exploited Vulnerabilities (KEV) catalog that documents CVEs actively being weaponized in the wild. Cross-referencing your asset inventory against both is a powerful starting point.

The critical insight about EOL is this: it doesn’t mean the system stops working. It means that when new vulnerabilities are discovered — and they will be — no patch will be issued. You are flying blind. Every day after EOL, the system becomes incrementally less defensible. When a CVE is published for an EOL component, there is no fix. Your only options are compensating controls, workarounds, or migration. And compensating controls are not free.

Patch Status goes beyond a binary “patched or not” assessment. The relevant question is: how far behind is this system, and what does that exposure look like in terms of CVE coverage? A system three months behind on patches with two unresolved CVEs — one of which is a medium-severity information disclosure — is a very different risk profile from a system running a two-year-old patch level with 200+ unpatched vulnerabilities including multiple critical remote code execution flaws. Vulnerability scanners like Tenable Nessus, Qualys, or Rapid7 InsightVM can produce aging reports that tell you not just what’s unpatched but the cumulative CVSS score exposure across your asset inventory.

Integration Debt is the dimension most often overlooked in legacy risk conversations, and it is frequently what makes remediation genuinely hard. Before you can retire or migrate a legacy system, you need to understand its blast radius — how many other systems depend on it? In complex enterprise environments, a single legacy application may be the source of truth for dozens of downstream integrations. APIs may be calling it. Batch processes may depend on its file export formats. Other applications may authenticate through it. Mapping this dependency graph is often the most time-consuming part of a legacy modernization project, and organizations that skip this step discover the dependencies the hard way — mid-migration, when something breaks in production.

Knowledge Retention is the human dimension of legacy risk — and in many organizations, it is the most acute. The “bus factor” is a crude but useful concept: how many people on your team need to be unavailable before you cannot maintain a given system? For many legacy applications, that number is one. There is frequently one engineer — often approaching retirement age — who wrote the original system or has maintained it for two decades, who holds the institutional knowledge that makes it run. When that person leaves, the organization discovers that the documentation is sparse, the code is uncommented, and the operational runbooks are either missing or catastrophically out of date.

The legacy risk assessment, properly done, is a matrix across all four dimensions for every system in your inventory. It is not a one-time exercise — it should be maintained continuously as systems age, vendors change support commitments, and staff turn over. Organizations that do this well have a real-time view of their technical debt and can make prioritization decisions based on data rather than gut instinct.

The Real Cost of Doing Nothing

The phrase “if it ain’t broke, don’t fix it” is one of the most expensive axioms in enterprise IT. When applied to legacy systems, it consistently underestimates four categories of cost that compound over time.

Operational Risk manifests first in subtle ways: slower response times, intermittent failures, error rates that edge upward year over year. Legacy systems weren’t designed for modern workloads. Hardware components age and fail. Mean time to repair (MTTR) increases as replacement parts become harder to source, vendor support contracts expire, and the pool of engineers who know the system shrinks. Organizations that have run aging IBM AS/400 or Unix systems for decades can tell you about the increasingly frantic calls to find replacement drives or memory that are no longer manufactured. The operational risk calculates not just downtime probability but the cost of each downtime event — and in environments where system availability is tied directly to revenue or patient care, that cost can be enormous.

Security Risk is the most quantifiable dimension, and the math is uncomfortable. Take the average CVSS score of your unpatched vulnerabilities, multiply by the number of unpatched CVEs on a given system, and then factor in exposure — is this system internet-facing? Does it hold sensitive data? Is it in your network core or isolated in a DMZ? The IBM/Ponemon Cost of a Data Breach Report for 2023 puts the average cost of a data breach in the United States at $9.48 million. Organizations with high levels of technical debt — measured in part by unpatched systems — consistently show higher breach costs and longer breach detection cycles. The correlation is not accidental. Unpatched systems are easier to exploit, and easier to exploit means breaches go deeper and linger longer before detection.

Talent Risk is the category most executives underestimate. Modern engineers do not want to maintain COBOL, Oracle Forms, Lotus Notes, or Windows Server 2003. The developer and systems engineer talent markets have become highly competitive, and candidates evaluate technology stacks as part of their job decisions. Organizations with heavy legacy footprints struggle to attract junior engineers — why learn a dead language for a dead system? — and retain senior engineers, who leave for organizations investing in modern platforms. The institutional knowledge problem compounds with every departure. Stack Overflow’s annual Developer Survey consistently shows that legacy languages and platforms rank near the bottom of “technologies engineers want to work with” — and near the top of “technologies they are working with.” That gap represents talent attrition risk.

Forced Migration Risk is perhaps the most financially damaging category, and the most avoidable. When a vendor announces an end-of-life date, organizations that have been ignoring their legacy debt suddenly face a deadline. When a critical security incident forces emergency action — as WannaCry did for NHS trusts — the migration is no longer a planned project with appropriate discovery, testing, and change management. It is an emergency response. Emergency migrations are estimated by industry analysts to cost 3–5 times more than planned migrations, because they compress timelines, skip testing, require premium consulting rates, and introduce their own operational risks.

Gartner has estimated that 70 to 80 percent of enterprise IT budgets are consumed by maintaining existing systems — the running-in-place cost of keeping legacy infrastructure operational. That is IT spending that could be funding innovation, capability improvement, and competitive differentiation. The cost of doing nothing is not zero. It is the slow, compounding accumulation of technical debt that eventually becomes a crisis — and crises are expensive.

Legacy Risk in FDA-Regulated Environments: The “Frozen in Amber” Problem

For organizations operating in FDA-regulated environments — pharmaceutical manufacturers, medical device companies, clinical laboratories, and contract research organizations — legacy risk takes on a dimension that requires specific attention. I have spent a significant portion of my career working in these environments, and the challenge is one I call the “frozen in amber” problem.

In FDA Computer System Validation (CSV) environments, computerized systems used in GxP processes — those that affect product quality, patient safety, or data integrity — must be validated. Validation, in the FDA’s framework, involves Installation Qualification (IQ), Operational Qualification (OQ), and Performance Qualification (PQ): documented evidence that the system was installed correctly, operates as intended, and performs reliably in its intended use. This validation documentation is often extensive, time-consuming to produce, and expensive to maintain.

The problem emerges at the intersection of validation and patching. Many organizations interpret FDA guidance — particularly 21 CFR Part 11 and the FDA Guidance for Industry: General Principles of Software Validation — as requiring re-validation whenever a validated system is modified. Patches modify systems. Therefore, patching a validated system triggers re-validation. Re-validation is expensive. Therefore, don’t patch the validated system.

This logic leads directly to systems that are, in the most literal sense, frozen in amber. They cannot be updated. They cannot be patched. They run in an increasingly hazardous state as their unpatched CVE inventory grows, while the organization insists that the FDA requires this posture. The system that was validated in 2012 may be running on an operating system that has been end-of-life for eight years. It may have hundreds of unpatched vulnerabilities. And because it is “validated,” the organization believes it is untouchable.

This interpretation of FDA guidance is incorrect — and dangerously so.

The FDA’s General Principles of Software Validation guidance explicitly endorses a risk-based approach. Patches do not automatically require full re-validation. The standard requires that organizations assess the impact of changes and document their assessment. For security patches affecting non-GxP components — operating system updates, network stack patches, firmware updates to hardware that does not affect validated software behavior — a documented impact assessment and regression testing of validated functionality is frequently sufficient. The organization must be able to demonstrate that the validated functions still operate correctly after the patch is applied. That is a very different requirement from full IQ/OQ/PQ re-execution.

GAMP 5 (Good Automated Manufacturing Practice, 5th edition), published by the International Society for Pharmaceutical Engineering (ISPE), provides detailed guidance on change control for validated computerized systems. GAMP 5 explicitly describes a risk-based change management process in which changes are categorized by their potential impact on validated functionality, and the level of documentation and testing required is proportional to that impact. A security patch that addresses a vulnerability in the Windows network stack, with no changes to application logic or user interface, may require only a documented impact assessment and a smoke test of validated workflows.

Organizations that adopt a risk-based patching posture for FDA-validated systems — and document it rigorously — can maintain both their security posture and their validation compliance. The FDA has not issued guidance saying validated systems may not be patched. On the contrary, FDA warning letters have cited organizations for inadequate attention to cybersecurity risk in computerized systems. The regulatory risk of an unpatched, EOL system being the entry point for a data breach or ransomware attack in a GxP environment is significant — not just from a cybersecurity perspective but from a data integrity standpoint, which is one of the FDA’s primary concerns.

The “frozen in amber” problem is solvable. It requires organizational maturity, the willingness to engage quality assurance teams in a conversation about risk-based change control, and a clear policy framework that distinguishes between changes requiring full re-validation and changes requiring only documented impact assessment. In my experience, organizations that do this work find that the vast majority of routine security patches fall into the latter category.

The Five Questions to Ask About Every Legacy System

A practical legacy risk assessment doesn’t require a six-month consulting engagement. It requires asking five questions about every system in your inventory — and having the organizational discipline to act on the answers.

1. Is it still vendor-supported?

This is the baseline question. Check the vendor’s support matrix. Cross-reference against endoflife.date, which aggregates EOL data for operating systems, programming languages, frameworks, databases, and hardware. Check CISA’s Known Exploited Vulnerabilities catalog to see whether any CVEs affecting your system have been observed in active exploitation. If the system is running on EOL components, document it explicitly and flag it for risk disposition. “We didn’t know” is not an acceptable answer when a CVE that appeared in the KEV catalog six months ago becomes the entry point for a breach.

2. When was it last patched, and what is the CVE exposure?

Run a vulnerability scan — Tenable Nessus, Qualys VMDR, or Rapid7 InsightVM — and get a current count of unpatched CVEs by severity. Calculate your exposure window: how many days since the last critical-severity patch was applied? For each critical or high-severity CVE, note whether it is included in CISA’s KEV catalog. KEV membership means the vulnerability is being actively exploited in the wild, which dramatically elevates risk. This data should be in a system owner’s hands, reviewed at least quarterly.

3. Can it be isolated if compromised?

Network segmentation is one of the most effective compensating controls for systems that cannot be immediately patched or migrated. The question is whether your legacy system sits in a flat network architecture — where a compromise propagates laterally across your entire environment — or whether it can be placed in a VLAN or microsegment with strict ingress/egress controls. East-west traffic monitoring and microsegmentation (using tools like Illumio, Guardicore, or native cloud security groups) can dramatically limit the blast radius of a legacy system compromise. If your legacy system is in the same network segment as your crown jewels, that is an urgent remediation priority regardless of the migration timeline.

4. What is the annual risk cost versus migration cost?

Frame this as a financial comparison. Estimated annual risk cost equals the probability of a breach multiplied by the expected cost of a breach for a system of this type, plus the ongoing operational overhead cost. Migration cost is the fully-loaded project cost amortized over the expected life of the replacement system. In many cases, organizations that run this calculation discover that the annual carrying cost of the risk premium on a legacy system exceeds the annualized migration cost — particularly when cyber insurance premium increases for EOL software are factored in. This framing speaks directly to CFO decision-making.

5. Does the business even know they own it?

”In my experience, the most dangerous legacy systems are the ones no one admits exist.”

Shadow IT legacy systems are more common than most security programs account for. Systems acquired through mergers and acquisitions that were never fully inventoried. Departmental applications purchased outside the formal IT procurement process ten years ago, still running in production on a server tucked in a closet. Long-forgotten projects whose decommissioning was planned but never executed. An automated asset discovery capability — Axonius, Tenable.sc’s asset correlation, or ServiceNow’s CMDB — combined with network scanning is essential for finding these systems. You cannot protect what you cannot see. And the systems no one can see are the ones attackers will find first.

How to Make the Business Case for Legacy Modernization

Technical debt discussions frequently die in executive staff meetings because they are framed in technical terms executives do not internalize as financial risk. Effective legacy modernization business cases translate technical debt into the financial, regulatory, and operational language that drives investment decisions.

Cyber Insurance Implications

The cyber insurance market has undergone a significant hardening over the past three years. Insurers are now explicitly asking, in policy applications, about end-of-life software in production environments. Policy language in major commercial cyber insurance products increasingly includes exclusions or premium adjustments for organizations running EOL operating systems or applications with known unpatched critical vulnerabilities. Some insurers have denied claims outright when post-incident forensics revealed that the breach entry point was an EOL, unpatched system. Framing legacy modernization as a prerequisite for maintaining cyber insurance coverage at acceptable premium rates is one of the most effective arguments available to a CISO making a board-level presentation.

Regulatory Risk

Regulatory bodies have shown increasing willingness to treat unpatched, EOL systems as evidence of inadequate security practices. The FTC’s enforcement action against Wyndham Hotels (FTC v. Wyndham Worldwide Corporation) established that failure to implement reasonable security measures — including failure to patch known vulnerabilities — constitutes an unfair trade practice under Section 5 of the FTC Act. The HHS Office for Civil Rights (OCR) has cited unpatched vulnerabilities in HIPAA enforcement actions. The SEC has made clear, in its cybersecurity disclosure rules for public companies, that material cybersecurity risks — including known vulnerabilities in legacy systems — may require disclosure. When a breach occurs and the entry point is an EOL system with a documented unpatched CVE, regulators will ask why it was not addressed. The answer needs to be better than “we didn’t get to it.”

Talent Acquisition and Retention

Engineering and IT talent surveys consistently show that technology stack modernity is a significant factor in developer and systems engineer job decisions. Organizations carrying heavy legacy debt struggle to recruit junior talent and retain senior talent. The cost of a vacant senior engineering position — including recruiting fees, time-to-fill, and productivity loss — often exceeds $100,000 in fully loaded terms. When multiplied across multiple positions vacated because engineers declined to maintain legacy systems, the talent cost of technical debt becomes a meaningful line item in the modernization business case.

The Total Cost of Ownership Comparison

Build a five-year TCO model comparing legacy maintenance against modernization investment. Legacy TCO includes: vendor support contract costs (often premium rates for out-of-support software), in-house maintenance labor, operational risk premium (expected cost of incidents multiplied by probability), cyber insurance premium increases for EOL software, and talent cost premiums. Modernization TCO includes: migration project cost, new platform licensing, implementation labor, and ongoing maintenance — typically lower for modern platforms with active vendor support and broader talent pools. Amortized over five years, modernization frequently delivers positive ROI — particularly when the risk premiums for legacy systems are calculated at realistic probability rates rather than optimistic ones.

A Practical Legacy Remediation Framework

Knowing that legacy risk exists is the beginning. Acting on it requires a structured process that is repeatable, defensible, and aligned with how organizations actually make and execute decisions.

Phase 1: Discovery

You cannot manage what you cannot see. The first step is a comprehensive, automated asset inventory. Tools like Tenable.sc, Axonius, and ServiceNow CMDB provide agent-based and agentless discovery across network-connected assets. The goal is a complete catalog of every system — hardware model, operating system and version, installed applications and versions, network location, last seen date, and assigned owner. Critically, this discovery must include scanning for shadow IT: run discovery against all subnets in your environment, not just the ones in your CMDB. The systems not in the CMDB are the ones most likely to be running EOL software and least likely to have been patched recently.

Phase 2: Risk Scoring

Score each discovered system on five dimensions, using a 0–5 scale for each, for a maximum score of 25:

- Supportability (0–5): 5 = fully end-of-life, no patches available. 0 = current vendor LTS, patches available.

- Patch Status (0–5): 5 = critical unpatched CVEs present, >180 days since last patch. 0 = fully current, patched within 30 days.

- Data Sensitivity (0–5): 5 = processes regulated or highly sensitive data (PII, PHI, IP). 0 = no sensitive data.

- Network Exposure (0–5): 5 = internet-facing, no segmentation. 0 = isolated VLAN, no inbound external access.

- Knowledge Retention (0–5): 5 = bus factor of 1, person approaching retirement, no documentation. 0 = fully documented, multiple qualified maintainers.

Systems scoring 20 or above are critical priority. Systems scoring 15–19 are high priority. 10–14 medium priority. Below 10 are candidates for periodic review.

Phase 3: Disposition Decision

For each system, one of four dispositions is assigned:

- Modernize: Replatform or redevelop. Highest cost, highest long-term value. Appropriate for systems that are core to business function and must evolve with the business. Typically involves a formal project with architecture design, development, testing, and migration.

- Migrate: Lift-and-shift to a supported platform — for example, moving an application from Windows Server 2003 to Windows Server 2022 without rewriting the application. Lower cost than full modernization, maintains functionality, eliminates the EOL OS risk. Appropriate when the application logic is sound but the underlying platform is the risk.

- Retire: Decommission entirely. Migrate data to a successor system or archive it per records retention policies. Appropriate when the business function the system served is no longer needed, or when a modern replacement already exists and data migration is the only remaining obstacle.

- Accept: Document the risk, apply compensating controls, and monitor. Appropriate as a temporary measure for systems where migration is planned but not yet funded, or for low-criticality systems where risk is genuinely acceptable. Compensating controls must be real: network segmentation, enhanced monitoring, WAF or IPS in front of the system, privileged access management for administrative access, and regular review cadence.

Phase 4: Roadmap and Governance

Build a three-year legacy reduction roadmap aligned to budget cycles. Track it quarterly in a risk register that is visible to executive leadership and, where appropriate, to the board. Report progress in terms of risk reduction — systems moved to supported platforms, CVE exposure reduced, risk scores improved — not just project milestones. Normalize the conversation: legacy debt is a business risk that belongs in the same governance forums as financial and operational risk, not just in IT team meetings.

Bottom Line: Five Takeaways

Legacy systems are a form of borrowed time. Every day a system runs on an EOL platform without compensating controls is a day the organization is accepting risk it may not have quantified. The WannaCry attack on the NHS was preventable — a patch was available, the vulnerability was documented, and the warning was given. The gap between knowing and acting is where most legacy risk lives.

1. Age is not the risk — EOL and patch status are. Evaluate every system on supportability, patch currency, integration debt, and knowledge retention. Build a real-time risk register.

2. The cost of doing nothing is not zero. Operational degradation, security exposure, talent attrition, and forced emergency migration all compound over time. Model the risk cost explicitly.

3. In FDA environments, “validated” does not mean “unpatchable.” A risk-based approach to patch impact assessment, documented per GAMP 5, allows validated systems to be kept current without full re-validation of every change.

4. Compensating controls buy time — they don’t eliminate risk. Network segmentation, enhanced monitoring, and WAF/IPS can reduce exposure, but they are not substitutes for remediation. Accept risk deliberately and with a migration timeline attached.

5. The business case is financial, not technical. Frame legacy debt in terms of cyber insurance premiums, regulatory exposure, talent cost, and TCO comparison. Executive decisions respond to financial risk language.

Technical debt is not a technology problem. It is a business risk problem that happens to live in technology. The organizations that manage it well are the ones that bring it into the open — name it, quantify it, and make deliberate decisions about it. The ones that don’t are the ones who find out what it costs in the worst possible way.

Citations and References

-

UK National Audit Office. Investigation: WannaCry cyber attack and the NHS. October 2017. Available at: nao.org.uk

-

Gartner. IT Key Metrics Data: Measuring the Cost of Technical Debt. Gartner Research, multiple years.

-

Cybersecurity and Infrastructure Security Agency (CISA). Known Exploited Vulnerabilities Catalog. Available at: cisa.gov/known-exploited-vulnerabilities-catalog

-

Cybersecurity and Infrastructure Security Agency (CISA). End-of-Life Software Guidance. Available at: cisa.gov

-

U.S. Food and Drug Administration. 21 CFR Part 11: Electronic Records; Electronic Signatures. Available at: fda.gov

-

U.S. Food and Drug Administration. General Principles of Software Validation; Final Guidance for Industry and FDA Staff. January 2002. Available at: fda.gov

-

International Society for Pharmaceutical Engineering (ISPE). GAMP 5: A Risk-Based Approach to Compliant GxP Computerized Systems. Second Edition, 2022.

-

IBM and Ponemon Institute. Cost of a Data Breach Report 2023. Available at: ibm.com/security/data-breach

-

endoflife.date. End of Life software tracker. Available at: endoflife.date

-

Federal Trade Commission. FTC v. Wyndham Worldwide Corporation. U.S. Court of Appeals, Third Circuit, 2015.

#LegacySystems #TechnicalDebt #CyberSecurity #RiskManagement #EnterpriseIT #FDACSV #CloudMigration #InfoSec #ITModernization #DigitalTransformation